October 15, 2018 – Wearable technology developed at Dartmouth College with the potential to change the way we live and work will be introduced at the 31st ACM User Interface Software and Technology Symposium (UIST 2018).

The devices to be presented by Dartmouth include a battery-free gesture recognition system, an inductive sensing system that turns conductive objects into input devices for smartwatches, and a wearable that extends body language to the human ear. The research projects, from Dartmouth's DartNets and XDiscovery labs, demonstrate the innovative thinking and technical skills essential for developing the next generation of "smart" wearable devices.


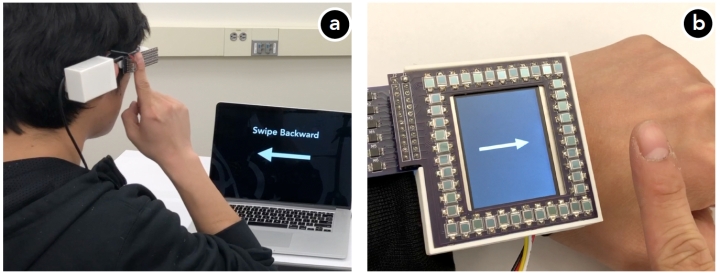

Integrating the gesture recognition prototype within (a) an eyeglass frame and (b) a smartwatch. Photo credit: DartNets Lab.

Self-powered gesture recognition

Finger gestures make it easier to interact with small devices, but gesture recognition can consume a relatively large amount of power. As technology developers work toward smaller devices, such as phones, cameras and other displays, that need little or no battery power, Dartmouth's self-powered gesture recognition system uses ambient light both to power the wearable and to support "always-on" recognition of inputs without batteries. A new algorithm also allows the system to overcome the technical challenge posed by unpredictable lighting conditions. For more information, please see https://dartgo.org/gestures


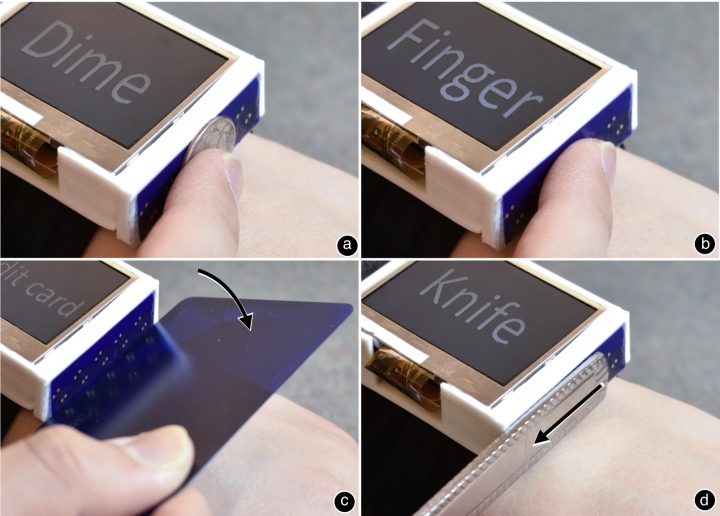

Conductive objects input commands to a smartwatch, including (a) a coin, (b) a finger, (c) a credit card, and (d) a knife. Photo credit: Jun Gong.

Indutivo: Using inductive sensing to interact with wearables

As wearable technology becomes smaller, interacting with the devices can become more difficult. Indutivo addresses this problem by turning just about any conductive object into an input device for smartwatches. By sliding, rotating and hinging objects against an enabled smartwatch, users gain a new range of input possibilities using common objects like paperclips or keys.

For a project video, please see https://dartgo.org/Indutivo

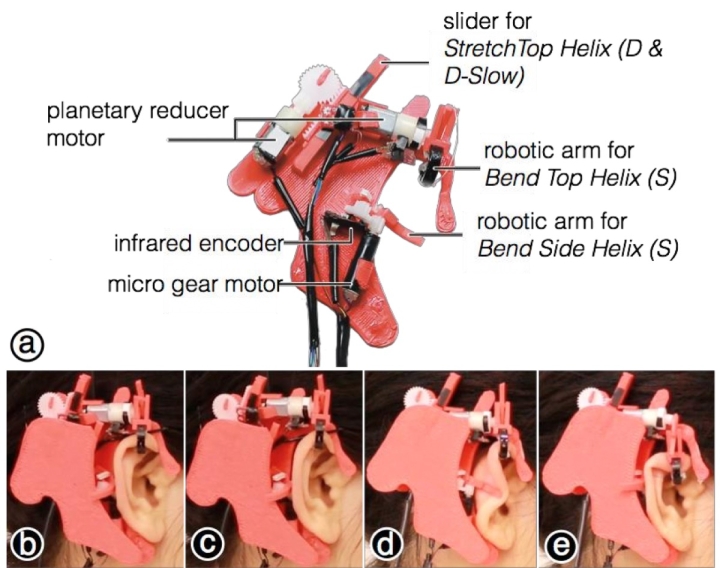


Orecchio prototype fixed to the ear's auricle with (a) normal shape, (b) top helix stretched, (c) side helix bent, and (d) top helix bent, each showing a different emotion. Photo credit: Da-Yuan Huang.

Orecchio: Extending body language to the human ear

While body language is an effective tool for human communication, it has limitations. Orecchio expands the possibilities for communicating with others by using the human ear to display emotions like happiness, boredom and excitement. The device works by actuating the ear's auricle, the visible part of the ear, with a wearable device composed of miniature motors, custom-made robotic arms and other electronic components. Orecchio's developers at Dartmouth say testers have greeted the prototype as "a welcome addition to the vocabulary of human body language."

For a project video, please see https://dartgo.org/Orecchio

UIST takes place from October 14 through October 17 in Berlin, Germany.

Dartmouth's DartNets lab is co-directed by Xia Zhou, associate professor of computer science. The XDiscovery lab is led by Xing-Dong Yang, assistant professor of computer science.

###

Editor’s Notes:

The first author for the research paper on Indutivo is Jun Gong, PhD student at Dartmouth College. The paper is available at: https://www.cs.dartmouth.edu/~xingdong/papers/Indutivo.pdf

The first author for the research paper on Orecchio is Da-Yuan Huang, assistant professor at National Chiao Tung University. The paper is available at: https://www.cs.dartmouth.edu/~xingdong/papers/Orecchio.pdf

The co-primary authors of the research paper on self-powered gesture recognition are Yichen Li, a postdoctoral researcher, and Tianxing Li, a PhD student; both are at Dartmouth College. The paper is available at: https://home.cs.dartmouth.edu/~xia/papers/uist18-selfpower.pdf

XDiscovery Lab’s Xing-Dong Yang may be reached at: xing-dong.yang@dartmouth.edu

DartNets Lab's Xia Zhou may be reached at: Xia.Zhou@dartmouth.edu