Cal Newport ’04 is not worried about ChatGPT taking over the world or destroying jobs.

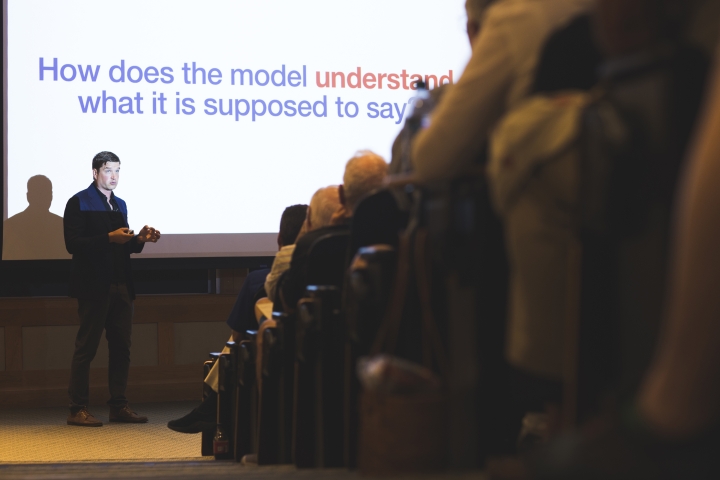

But he does see artificial intelligence systems changing workplaces and academia, topics he addressed before a crowd of about 150 people in Filene Auditorium on Wednesday.

“It’s not that we should let our guard down,” the Montgomery Fellow and visiting faculty member in the Department of Computer Science said. “But our cortisol level can decrease just a little.”

Newport, an associate professor of computer science at Georgetown University, also writes articles for The New Yorker and best-selling books on culture and technology.

A former columnist for The Dartmouth and editor of The Dartmouth Jack-o-Lantern humor magazine, he credits Dartmouth’s commitment to liberal arts with his ability to explore technical issues in an approachable way.

“At Dartmouth, this idea that you could be studying world-class science and at the same time embrace the arts … that was actually quite common,” Newport said.

Jacob H. Strauss 1922 Professor of Music Steve Swayne, the director of the Montgomery Fellows Program, says Dartmouth is pleased to have Newport, an alumnus and an expert in his field, on campus this summer.

“Cal’s return shows our faculty that what we do matters and provides our students with a concrete example of how they can be agents of change in the world,” Swayne says.

Newport began his speech by explaining how an AI system like ChatGPT responds to questions with human-sounding answers. The AI chatbot is not “thinking” about the answer, or even the question, when it’s fed a prompt.

“And this is where it’s sometimes surprising for people,” Newport said. “What’s it going to spit out? It’s just a single word.”

A system like ChatGPT predicts the next word based on the probability of what it has seen before, along with cues from the prompt it has been given and the rules of grammar. Then it repeats the entire process to find the word after that.

It’s like a near-sighted frog that only looks one lily pad ahead. Systems like ChatGPT don’t have a memory of the past or a destination in mind. They’re just searching for the next place to hop.
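The word-by-word loop described above can be illustrated with a toy sketch in Python. This is not how ChatGPT is built: a real system uses a very large neural network and takes the whole prompt into account, while this example only counts which word tends to follow which in a tiny made-up text. The corpus and the function names (`build_counts`, `next_word`, `generate`) are hypothetical and chosen only for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; a real system learns from vastly more text.
CORPUS = "the frog hops to the pad and the frog rests on the pad"


def build_counts(text):
    """Count how often each word follows each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    # Break ties alphabetically so the result is deterministic.
    return min(followers, key=lambda w: (-followers[w], w))


def generate(counts, prompt, max_words=5):
    """Extend the prompt one word at a time, looking only at the last word."""
    words = prompt.split()
    for _ in range(max_words):
        word = next_word(counts, words[-1])
        if word is None:
            break  # nothing ever followed this word in the corpus
        words.append(word)
    return " ".join(words)


counts = build_counts(CORPUS)
print(generate(counts, "the", max_words=3))
```

Like the frog, `generate` never plans ahead: each pass through the loop picks a single next word and then starts over from wherever it landed.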

But how do advanced chatbots sound so composed? Newport asked. It’s not because of a new mechanism. The answer is scale.

“You could fill an entire Baker-Berry Library full of books just describing different patterns it might recognize and different chains of logic it might use … and still have way more books than you had room to actually put into it,” Newport said. “This incomprehensible scale is part of what allows these models to be so impressive.”

Despite that, AI systems are not alive in any sense of the word. They have no ability to reflect or change. With multiple processors grinding out word choices simultaneously, there’s not even a single intelligence to worry about, he said. All they do is pick out one word, over and over.

“They will not accidentally become self-aware,” Newport assured the audience. Maybe someday a system like ChatGPT could be used as part of a true AI—and tech leaders have expressed concerns about that possibility—but that’s not what we have today.

“It’s not going to fire the missiles,” Newport said. “It is going to introduce more bad behavior,” such as misinformation or hard-to-detect scams. However, it’s unclear if AI systems will make these already-existing problems significantly worse.

Newport acknowledged that some might consider his position to be “Pollyannaish,” but he also said, “I’m confident in that position because I’ve spent a lot of time thinking and dealing with the actual technology.”

Currently, Newport is working on an article about how AI will affect jobs. Though he’s seen a lot of headlines prophesying doom and millions thrown out of work, Newport isn’t as concerned.

While AI is good at, for example, summarizing an email chain and coordinating a time to meet up, it can’t organize a conference, Newport explained. AIs lack nuance and specialized information.

“Most knowledge work jobs are not built around generating text on general topics,” Newport said.

Microsoft, which is a big investor in OpenAI, the company that makes ChatGPT, is testing plug-ins to its software products to help with common office tasks. Newport predicted the impact of tools like this will be similar to when email and the Internet entered the workplace.

“So the most likely future is not that these productivity-enhanced language models are going to eliminate massive numbers of jobs so much as they will change them,” Newport said.

Those changes are already being felt on campuses like Dartmouth’s, as academics at various institutions weigh banning AIs versus allowing them as tools. Newport admitted there weren’t good answers yet, but his service on a Georgetown task force examining the issue has led him to lean toward seeing AI more as a tool and less as a threat.

Newport recalled how disruptive the internet was for colleges and universities. Professors had to adjust their teaching in a world where almost all information was available—including last year’s exams.

On the bright side, Newport pointed out that AIs were very good at explaining subjects like math, and students could ask the digital tutors questions.

“We are going to be using it, not trying to ban it,” he said.

After the talk, Zhuoya Zhang, a PhD student in the Quantitative Biomedical Sciences Program, said she had read Newport’s book Digital Minimalism: Choosing a Focused Life in a Noisy World during the pandemic, and it “completely changed my relationship with social media.”

“I learned to be more intentional about when and why I use it. It was wonderful meeting him and saying thank you in person,” Zhang said. “I truly enjoyed his speech. Newport demystified large language models like ChatGPT and offered his candid opinions on the risks and opportunities brought by this technology.”

Isabel Macht, who will be a high school senior next year at Fryeburg Academy in Maine, was visiting her father in the area and also decided to attend the talk. She’d heard about ChatGPT, but mostly from teachers warning her not to use it.

Macht had been worried about how to prepare for AI job disruptions, but she came away more hopeful after Newport’s talk.

“It did help with the idea of, OK, just continue with your path and transform your life with AI as it comes at you,” she said.

Newport is teaching a computer science course, Writing About Technology, this summer and has also given other talks on campus, including one at the Rockefeller Center in late June on rethinking work in the age of distraction.