New technology that allows airborne drones to locate aquatic robots is taking off at Dartmouth.

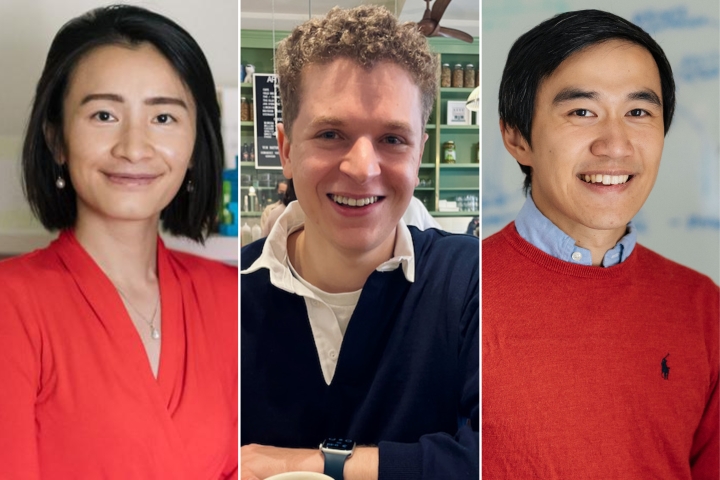

Researchers from the HealthX and Reality and Robotics Labs, led by computer scientists Xia Zhou and Alberto Quattrini Li, created Sunflower, an aerial sensing system that uses laser light to peer past the water’s surface and detect robots underwater.

Their work was presented on Tuesday at the ongoing conference on Mobile Systems, Applications, and Services (MobiSys) 2022.

From monitoring marine and freshwater environments to charting previously unexplored parts of the ocean, aquatic robots are primed to do a lot. To tap into their tremendous potential, the robots must know where they are as they navigate the depths of the ocean or other bodies of water. But GPS relies on radio signals, which don’t travel very far in water.

Until now, aquatic robots have used acoustic signals to find their way around without getting lost. They communicate with a central system that is mounted on a boat—an expensive affair with a lot of logistical overhead.

“It is not conducive to making the use of this technology more widespread,” says Quattrini Li, who specializes in building low-cost, autonomous robots, often swarms of them, to explore challenging environments.

When Zhou, an expert in using light for wireless communication and sensing, learned about the challenge of keeping track of robots in the water, she was immediately interested. “We realized that light was the perfect medium in the underwater context,” says Zhou.

Working with Quattrini Li’s team, Zhou and her students set out to build a light-based system that can advance the potential use of aquatic robots.

The researchers equipped a drone, which they refer to as the queen, with a laser that scans the water with its beam. “The queen has quite a bit of stuff packed into a pretty small packet,” says Charles Carver, Guarini ’22, lead author of the paper. Alongside the laser transmitter is a steering mechanism that uses mirrors to sweep the laser beam across a wide angle.

The robots, referred to as workers, are equipped with reflectors that bounce the light back to the drone with some key information: their depth and the angle at which the laser light hit them. With this data, the queen can compute the position of each robot, says Carver.
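To make the geometry concrete, here is a minimal sketch of how a position could be worked out from a reported depth and angle. It is an illustration under stated assumptions, not the researchers' actual method: it assumes a flat water surface, a known drone height above the water, a beam direction (azimuth) known from the steering mirrors, and that the reported angle is measured from the vertical inside the water. The function name `locate_worker`, its parameters, and the refractive index value are all hypothetical choices for this example.

```python
import math

# Approximate refractive index of water (assumption for this sketch).
WATER_REFRACTIVE_INDEX = 1.33


def locate_worker(depth_m, water_angle_rad, azimuth_rad, drone_height_m,
                  n_water=WATER_REFRACTIVE_INDEX):
    """Estimate a worker's position relative to the point below the drone.

    depth_m:         depth reported by the worker (meters below the surface)
    water_angle_rad: beam angle from the vertical, measured in the water
    azimuth_rad:     horizontal direction of the beam (assumed known)
    drone_height_m:  drone height above a flat water surface (assumed known)
    """
    # Snell's law at the air-water boundary: sin(air) = n_water * sin(water).
    sin_air = n_water * math.sin(water_angle_rad)
    if abs(sin_air) >= 1.0:
        raise ValueError("angle too steep: no beam from the air refracts this way")
    air_angle = math.asin(sin_air)

    # Horizontal distance covered in the air, then in the water.
    horizontal = (drone_height_m * math.tan(air_angle)
                  + depth_m * math.tan(water_angle_rad))

    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    return (x, y, -depth_m)  # negative z means below the surface


if __name__ == "__main__":
    # A worker 2 m deep, hit at 0.2 rad from vertical, drone 3 m above the water.
    print(locate_worker(2.0, 0.2, 0.0, 3.0))
```

A real system would also have to account for waves, which tilt the surface and bend the beam unpredictably, so a flat-surface model like this one is only a starting point.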

One key issue was the low intensity of the light that came back to the drone. It was too low for a light sensor to capture. The researchers came up with a novel design for a ring made of optical fibers that could cumulatively collect enough light, which they focused onto a super sensitive sensor placed at its center. This flower-like ring inspired the system’s name.

“Our electronics had to be lightweight and relatively low power. We also had to come up with engineering solutions to contend with waves that can displace the robots and ambient light that can obscure our signal,” says Zhou.

The researchers tested their system in a swimming pool where different wind and wave conditions were simulated. The queen could pinpoint the robots’ positions with centimeter-level accuracy. That compares favorably with the state of the art, the researchers say. Carver is optimistic about the system’s performance in real-world settings.

Quattrini Li is excited about the slew of possibilities the technology opens up for the future of environmental monitoring and underwater exploration. Guided by drones, the robots can venture into uncharted waters.